SAI project

In recent years, Deep Learning (DL) tools have become widespread and powerful, essentially because they started working in practice sometime between 2006, when Hinton et al. found a better way to initialize weights, and 2012, when AlexNet won ILSVRC by a wide margin over its competitors. After that, DL was applied to many different tasks, often with unprecedented leaps in results and performance.

One of the most important applications was DeepMind's AlphaGo, which even made the cover of Nature in 2016. It is a Deep Reinforcement Learning computer agent that plays the ancient board game Go at a superhuman level, a task that was deemed impossible with previous technology, and that had been regarded as a fundamental milestone in the development of AI since the time of Richard Feynman (p. 111).

Reinforcement Learning (RL) is a collection of iterative algorithms that (should) converge to an optimal agent for a given problem. The problem is typically formalized as a Markov Decision Process (MDP) with rewards. Perfect-information, zero-sum combinatorial games are a typical deterministic example, and the board game of Go is often considered the ultimate benchmark because of its gargantuan state space.

Pure RL approaches start from a random agent and play many episodes of the given MDP, continuously improving the agent by exploiting the information gathered from previous experience. AlphaGo was not a pure RL approach, as it also used information from human games and had all kinds of tweaks to ensure that it could perform reliably under the pressure of public matches (see the beautiful documentary AlphaGo the Movie). Subsequent iterations of DeepMind's research therefore focused on pure RL with AlphaGo Zero (2017, Nature again), and on universality with AlphaZero, which also efficiently developed SOTA agents for the simpler games of chess and shogi (2018, Science).

Starting in 2016, I got really interested in the novelty and universality of this approach. DeepMind has been paving the way for major AI breakthroughs ever since (the most recent success being AlphaFold), but it is a private company, not a research institute. And while it may have the strongest minds at work and the most incredible resources at hand, it probably lacks the interest in understanding and explaining every theoretical aspect of its discoveries. This creates opportunities for researchers worldwide to follow up with a wide range of interesting scientific work that might even have a direct impact on state-of-the-art AI research.

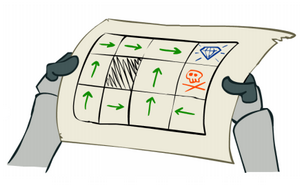

SAI (Sensible Artificial Intelligence, but also an obvious homage to a certain fictional player) is an improvement and generalization of AlphaZero (AZ). The neural network at the heart of AZ returns a policy distribution and an estimate of the value, which is the probability of winning. The neural network of SAI instead estimates the value as a two-parameter sigmoid function, which represents the probability of winning as a function of the bonus points given to the current player.
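As a rough illustration, a two-parameter sigmoid of this kind can be sketched as below. The parametrization (a shift `alpha` and a steepness `beta`) and all names are assumptions made for this example, not necessarily the form used in SAI's code.

```python
import math


def win_probability(bonus_points: float, alpha: float, beta: float) -> float:
    """Probability of winning as a sigmoid of the bonus points.

    Assumed parametrization (hypothetical names):
      alpha: horizontal shift of the curve, in points
      beta:  steepness of the curve (beta > 0)
    """
    z = beta * (alpha + bonus_points)
    # Numerically stable logistic function: avoid exp() of a large positive number.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


# With no bonus points, a positive alpha means the current player is favoured.
print(win_probability(0.0, alpha=3.5, beta=0.8))
# Taking away exactly alpha points makes the game even under this parametrization.
print(win_probability(-3.5, alpha=3.5, beta=0.8))
```

Under this assumed form, the curve crosses 0.5 at `bonus_points = -alpha`, so `alpha` can be read as an estimate of the current player's lead in points, while `beta` expresses how sharply the outcome depends on that lead.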

In this way, the agent of SAI is aware of the score of the game, while AZ is only aware of whether it is winning or losing. Hence it becomes possible to program the agent to try to win without playing suboptimal moves (moves that still win but needlessly lose points).
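The following toy sketch shows why score awareness helps. It is not SAI's actual agent: the selection rule, the `handicap` parameter, the move names, and the sigmoid parametrization (`alpha` shift, `beta` steepness) are all assumptions for illustration. Two moves that both win almost surely are nearly indistinguishable by win probability alone, but they separate clearly once the current player is evaluated as if it had given some points away.

```python
import math


def win_probability(bonus_points: float, alpha: float, beta: float) -> float:
    """Assumed two-parameter sigmoid (hypothetical parametrization)."""
    z = beta * (alpha + bonus_points)
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def pick_move(candidates: dict, handicap: float = 0.0) -> str:
    """Return the move with the highest win probability after the current
    player virtually gives away `handicap` points (toy rule, not SAI's)."""
    return max(
        candidates,
        key=lambda move: win_probability(-handicap, *candidates[move]),
    )


# Hypothetical (alpha, beta) estimates for the positions reached by two moves.
candidates = {
    "small_lead": (15.0, 1.0),  # wins by about 15 points
    "big_lead": (25.0, 1.0),    # wins by about 25 points
}

# Plain win probabilities are both extremely close to 1.
for move, (alpha, beta) in candidates.items():
    print(move, win_probability(0.0, alpha, beta))

# Evaluated with a 20-point virtual handicap, the difference is obvious.
print(pick_move(candidates, handicap=20.0))
```

An agent that only sees the first pair of numbers has almost no reason to prefer one move over the other, which is how point-losing moves creep in; evaluating the same sigmoids at a shifted number of bonus points restores a meaningful preference.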

The playing behaviour of the program gets an obvious benefit from this idea, but more importantly it is an interesting question whether it is even possible to train a network to predict two parameters with only one result per data point. It turns out that it is enough to include a random branching mechanism in games to make the training learn the two parameters efficiently.

The SAI project started as a fork of Leela Zero, an open-source clean-room implementation of AlphaGo Zero with distributed self-play. There is a GitHub repository, and a server that collects games and shows the progress.

The first implementation and tests were performed on the small 7x7 board. The paper [13] presents and explains the SAI framework, including the results of training on this very small board. It also introduces a flexible agent algorithm that exploits the information on the score to improve move choices. The entire training of a neural network on the 7x7 board, including self-play games, takes just a few days on a single workstation with a mid-range GPU and reaches almost perfect play on such a small game.

Subsequently, we performed three training experiments on the much more complex 9x9 board. The results are presented in the paper [14], along with several improvements and a comparison with strong human players. The entire training takes a few months on a single workstation with a mid-range GPU and reaches a superhuman level, but not perfect play.

Since then, we have been training SAI on the full 19x19 board. This takes years as a distributed effort and relies on the substantial computational resources of our contributors.

The next paper will include important novelties in the statistical inference done by SAI's agent and in the neural network structure, together with the lessons learnt from the larger training experiment.

There are then several research directions that were motivated or inspired by our work on SAI. These are yet to be developed before becoming publications, but they look very promising and interesting, and in some cases really beautiful (from a mathematical point of view).

| Ref. | Title | Authors | Venue | Year |
| --- | --- | --- | --- | --- |
| [13] | SAI a Sensible Artificial Intelligence that plays Go | Morandin, Amato, Gini, Metta, Parton, Pascutto | IJCNN | 2019 |
| [14] | SAI: a Sensible Artificial Intelligence that plays with handicap and targets high scores in 9×9 Go | Morandin, Amato, Fantozzi, Gini, Metta, Parton | ECAI | 2020 |